The Dark Side of AI: Examining the Potential Dangers and Risks of Artificial Intelligence

Artificial Intelligence (AI) has rapidly become integral to various sectors, revolutionizing industries and redefining how we live and work. Its widespread applications span healthcare, finance, transportation, customer service and more. As a result, the demand for AI continues to grow exponentially, with organizations harnessing its power to streamline operations, enhance productivity and unlock new possibilities.

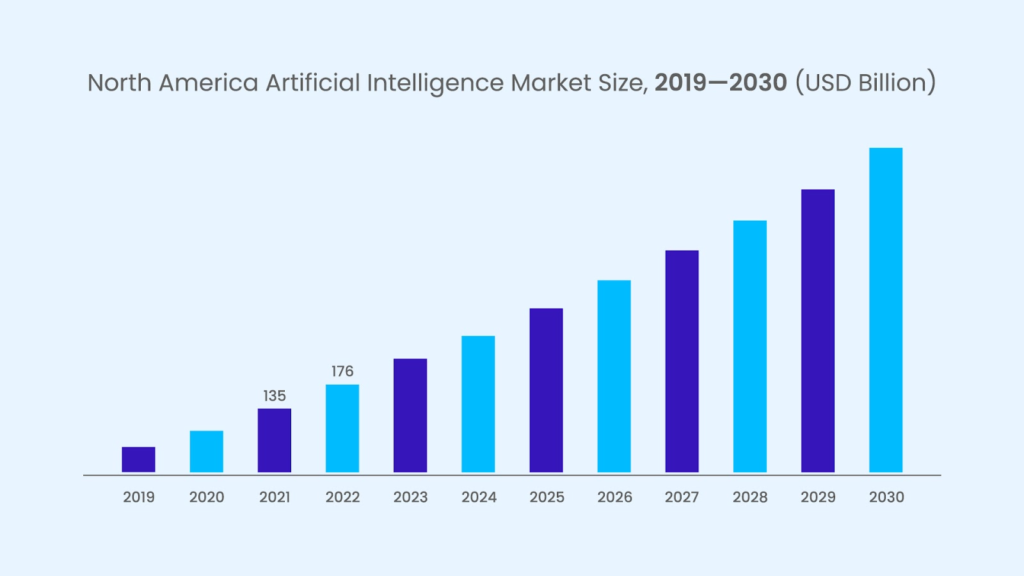

The global artificial intelligence market was valued at $428.00 billion in 2022 and is projected to grow to $2,025.12 billion by 2030.

The figure above gives an overview of how rapidly the artificial intelligence market is growing.
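As a rough sanity check on the market values quoted above, the annual growth rate they imply can be worked out directly. The sketch below assumes simple compound growth between the two endpoints; the function name `implied_cagr` is illustrative and is not taken from any market report.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market values quoted above, in billions of US dollars.
rate = implied_cagr(428.00, 2025.12, years=2030 - 2022)
print(f"Implied growth: {rate:.1%} per year")
```

Under that assumption, the quoted values correspond to growth of roughly 21% per year over the eight-year span.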

However, as AI becomes increasingly pervasive, it is crucial to recognize and understand the potential dangers and risks associated with its deployment. Ethical concerns, job displacement, biases, loss of privacy and the spread of misinformation are just a few of the challenges that require careful consideration.

In this blog, we will delve into the dark side of AI, exploring the potential risks and emphasizing the importance of using AI responsibly to safeguard against its unintended consequences.

Potential Dangers and Risks of AI

Bias and Discrimination in AI Systems

AI systems have shown a propensity for bias, which can lead to discrimination and unfair treatment in various applications.

Here are some examples:

1. Biased AI Algorithms:

- In 2018, Amazon had to scrap an AI recruiting tool that exhibited bias against female applicants. The algorithm had learned from historical hiring data, which was male-dominated, leading to biased recommendations.

- Facial recognition algorithms have been found to exhibit racial and gender bias, with higher error rates for women and people of color. One study showed that popular commercial facial recognition systems had higher false-positive rates for darker-skinned individuals.

2. Negative Impact on Marginalized Communities:

- Biased AI systems can exacerbate existing social inequalities and disproportionately impact marginalized communities. For example, biased algorithms used in criminal justice systems have resulted in harsher sentencing for minority groups.

- In the financial sector, AI-powered credit scoring models that rely on historical data may perpetuate discriminatory practices, making it harder for marginalized individuals and communities to access loans or financial services.

3. Ethical Considerations in AI Development:

- The development of AI systems must include ethical considerations to mitigate bias and discrimination. AI algorithms should be thoroughly tested for biases and fairness, and diverse datasets should be used to ensure representation and inclusivity.

- Ethical guidelines and frameworks should be established to promote responsible AI development. These guidelines should encourage transparency, accountability and ongoing monitoring of AI systems to identify and rectify biases.
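One simple way to test for the kind of bias described above is to compare how often a system selects candidates from each group. The following is a minimal sketch, not a complete fairness audit: the group labels and outcomes are invented for illustration, the function names are hypothetical, and the 0.8 threshold is an assumption loosely based on the "four-fifths" rule of thumb used in some employment contexts.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of positive decisions per group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the model recommended the candidate.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so there is no gap to measure
    return min(rates.values()) / highest


# Hypothetical screening outcomes, invented for illustration only.
decisions = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)
rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
flagged = ratio < 0.8  # assumed threshold; a real audit would justify its own
print(rates, ratio, flagged)
```

A low ratio does not prove discrimination on its own, but it is a cheap signal that a model's outputs deserve closer review before deployment.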

Job Displacement and Economic Implications

The rapid advancement of AI and automation technologies has led to concerns about job displacement and its broader economic implications. Here are some key aspects to consider:

1. Automation Leading to Job Loss:

- As AI continues to evolve, there is a growing realization that automation can replace human workers in various industries. Tasks previously performed by humans are now being automated, resulting in job losses and shifts in employment dynamics.

- The manufacturing sector clearly illustrates job displacement caused by automation. With the introduction of robotics and AI-powered machines, repetitive and labor-intensive tasks are being automated, decreasing the demand for manual laborers on assembly lines.

2. Economic Inequality:

- The widespread implementation of AI and automation can have far-reaching consequences on employment rates and economic inequality. While some argue that AI will create new job opportunities, others express concerns about the magnitude of job displacement and its potential impact on specific workforce segments.

- According to a report by the World Economic Forum, automation is estimated to displace around 85 million jobs by 2025, particularly in routine-based and low-skilled occupations. This shift in the labor market can lead to increased economic inequality if not adequately addressed.

3. Mitigating the Negative Effects of AI:

- Proactive measures need to be implemented to address the potential adverse effects of AI on the workforce. These measures should focus on reskilling and upskilling workers, fostering innovation and creating new job opportunities that align with the changing nature of work.

- Governments, educational institutions and businesses should recognize the importance of investing in reskilling programs to equip workers with the necessary skills to adapt to the changing job market. By providing training in emerging fields, such as data analysis, cybersecurity and AI-related technologies, workers can enhance their employability and reduce the risk of job displacement.

Privacy and Surveillance

1. Data Collection and Surveillance Capabilities:

- With the proliferation of AI technologies, there has been a significant increase in data collection capabilities, as AI systems rely on vast amounts of data to learn and make predictions.

- Surveillance systems powered by AI, such as facial recognition technology and smart surveillance cameras, can continuously monitor and analyze individuals’ activities, leading to a massive influx of personal information.

2. Risks to Personal Privacy and Civil Liberties:

- The extensive collection and analysis of personal data by AI systems raise concerns about eroding privacy rights and civil liberties.

- Aggregating personal data from various sources can create comprehensive profiles, allowing for invasive surveillance, targeted advertising and potential misuse of sensitive information.

3. Balancing the Benefits of AI with Privacy Concerns:

- Striking a balance between the benefits of AI and individuals’ right to privacy is crucial. While AI technologies can bring improvements in various domains, including healthcare and security, ethical considerations should be integrated into their development and deployment processes.

- Safeguarding privacy through data anonymization, informed consent and strict access controls can help mitigate the potential risks associated with AI-enabled surveillance and data collection.
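As a small illustration of the safeguards mentioned above, the sketch below drops one direct identifier and replaces another with a keyed hash before a record is used for analysis. Strictly speaking this is pseudonymization rather than full anonymization, since the remaining fields may still allow re-identification. The record fields, function names and key handling are assumptions made for the example.

```python
import hashlib
import hmac
import secrets


def pseudonymize(identifier: str, key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def strip_direct_identifiers(record: dict, key: bytes) -> dict:
    """Return a copy with the name dropped and the email replaced by a token."""
    cleaned = {k: v for k, v in record.items() if k not in {"name", "email"}}
    cleaned["user_token"] = pseudonymize(record["email"], key)
    return cleaned


# Assumption: in practice the key would be stored separately under strict access control.
key = secrets.token_bytes(32)
record = {"name": "Jane Doe", "email": "jane@example.com", "age_band": "30-39"}
print(strip_direct_identifiers(record, key))
```

Because the same key always maps an email to the same token, records can still be linked for analysis, while anyone without the key cannot easily recover the original address.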

Autonomous Weapons and Warfare

1. Development and Deployment of AI-powered Weapons:

- The advancement of AI has enabled the development of autonomous weapons systems that can operate without direct human control.

- These weapons include unmanned drones, autonomous combat vehicles and automated defense systems, which can make decisions and carry out actions independently.

2. Ethical Concerns in Autonomous Warfare:

- Autonomous weapons raise concerns about accountability and the potential for unpredictable outcomes. The lack of human intervention in decision-making can lead to unintended harm, civilian casualties and violations of ethical principles.

- Ethical dilemmas arise regarding proportionality, adherence to international humanitarian laws and the ability to assess the intent and consequences of autonomous actions in dynamic and complex war scenarios.

3. International Regulations and Governance:

- As the ethical challenges of autonomous weapons become more widely recognized, there is a growing consensus on the importance of establishing international regulations and governance frameworks.

- Collaborative efforts among nations, organizations and experts are necessary to develop guidelines and standards that ensure the responsible development, deployment and use of AI-powered weapons, focusing on minimizing harm, upholding human rights and adhering to international laws and norms.

Rise of AI-generated Fake Content

1. Deepfake Technology:

- Deepfakes are AI-generated multimedia content, such as videos, images and audio, that appears convincingly real but is manipulated or fabricated.

- The advancement of AI algorithms and deep learning techniques has made it easier to create sophisticated deepfakes, raising concerns about their potential misuse.

2. Spread of Misinformation:

- Deepfakes have the potential to spread misinformation at an unprecedented scale. They can be used to manipulate public opinion, deceive individuals and undermine trust in visual and audio evidence.

- Circulating AI-generated fake content poses significant challenges to media credibility, democratic processes and social cohesion.

3. Countermeasures and Detection Techniques:

- Researchers and tech companies are actively developing techniques to detect deepfakes and mitigate their impact, amid fears of widespread misuse.

- Methods such as forensic analysis, watermarking and AI-based algorithms are being explored to identify manipulated media and authenticate genuine content, alongside efforts to raise awareness about the existence and implications of deepfakes.

Superintelligence and Existential Risks

1. Existential Risks and the Control Problem:

- As AI systems become increasingly advanced, there are concerns about the control problem – the challenge of ensuring that superintelligent AI systems act in accordance with human values and goals.

- The potential risks include unintended consequences, decision-making processes beyond human comprehension and the potential for AI to act against humanity’s best interests.

2. Ensuring AI Systems Align with Human Values:

- Safeguarding against existential risks involves developing strategies to align AI systems with human values and to ensure human oversight and control.

- Research efforts focus on developing AI safety measures, value alignment techniques and governance frameworks to address the ethical and existential concerns raised by superintelligent AI.

For further insights, see “Why Google’s ‘Godfather of AI’ Resigned and What It Means for the Future of Technology.”

How Can We Mitigate Risks and Dangers Related to AI?

Transparency, explainability and accountability in AI systems are crucial aspects of ethical development. AI algorithms should be designed to provide transparent and interpretable results, allowing users to understand the decision-making process. This transparency helps build trust and enables users to identify any biases or errors in the system. Additionally, incorporating mechanisms for accountability, such as clear lines of responsibility and oversight, ensures that developers and organizations are held responsible for the actions and consequences of their AI systems.

To avoid bias and discrimination, it is essential to incorporate diversity and inclusivity in AI teams. By bringing together diverse perspectives and expertise, AI development teams can reduce the risks of algorithmic biases and ensure that AI systems are designed to cater to the needs of a wide range of users. Inclusivity also helps address potential blind spots and ensures that the benefits of AI are accessible to all.

Moreover, ethical guidelines and frameworks for AI development are crucial for setting industry standards and promoting responsible practices. These guidelines should address issues such as privacy, data protection, consent and the impact of AI on society. They provide a framework for developers and organizations to navigate the ethical complexities of AI and make informed decisions throughout the development lifecycle.

Implications in the Tech Industry:

- Fostering trust and enhancing reputation: Organizations prioritizing responsible AI use by adhering to ethical practices build trust with users. Users who perceive AI technologies as fair, transparent and accountable are more likely to adopt them.

- Mitigating legal and reputational risks: Ethical AI development practices help organizations mitigate the legal and reputational risks associated with biased or discriminatory AI systems. By prioritizing ethics, organizations can avoid legal consequences and public backlash.

- Improving user satisfaction and engagement: Ethical AI systems that consider diversity and inclusivity are designed to cater to a broader range of users. This leads to increased user satisfaction and engagement with AI technologies.

- Promoting innovation and creativity: Ethical AI practices encourage organizations to think critically about their technologies’ social and ethical implications. This fosters innovation by pushing for the development of AI systems that prioritize ethical considerations and address real-world challenges.

- Ensuring long-term sustainability: By incorporating ethical AI practices, organizations contribute to the long-term sustainability of the AI industry. Responsible use of AI promotes public confidence, reduces societal concerns and supports the development of a thriving and socially beneficial AI ecosystem.

Companies such as Google have developed their own AI principles, outlining commitments to fairness, privacy and accountability. For example, Google’s AI principles explicitly state that its AI technologies will be used for beneficial purposes and will not cause harm or be used for surveillance that violates internationally accepted norms. This commitment demonstrates the company’s dedication to responsible AI development and use.

Embracing Ethical AI: Shaping a Responsible and Beneficial Future

It is undeniable that AI has the potential to revolutionize our world across various sectors. However, with this immense power comes the responsibility to use AI ethically and with guidelines in place.

Tech professionals in particular have immense potential to bring out the positive impact of AI. For instance, developers bear a significant responsibility for shaping the future of AI. They are responsible for creating AI systems that align with ethical standards and societal values. As stewards of this transformative technology, developers must proactively consider the potential consequences of their creations.

This commitment to ethical AI development practices depends on reliable tech professionals, and finding them requires an extensive recruitment process. This is where Remotebase can play a valuable role.

Remotebase is a trusted platform that connects businesses with top-tier remote tech professionals who deeply understand AI technologies and the importance of ethical AI practices. By leveraging Remotebase, organizations can access a pool of talented professionals with a 2-week free trial and no upfront charges. The talent recruited by Remotebase is thoroughly vetted for technical expertise, experience and alignment with ethical AI principles.

FAQs

1. Are there any regulations governing the use of AI?

Different countries and regions have started implementing rules to control the use of AI. These regulations may address data protection, algorithmic transparency, safety standards and ethical considerations in AI development and deployment.

2. What is the role of AI in combating misinformation?

While AI can be used to generate fake content, it can also be utilized to detect and combat misinformation. AI-powered algorithms can analyze large datasets to identify patterns of misinformation and assist in fact-checking processes.

3. Can AI contribute to job displacement?

AI has the potential to automate specific tasks, which can lead to job displacement in certain industries. However, it also creates new job opportunities and has the potential to enhance productivity and drive economic growth.